
Blog Summary:

This post covers the AI tech stack you need to harness the full potential of AI technology and grow your business. Whether you want basic information about the generative AI tech stack, its components, AI tech stack layers, programming languages, or AI stack stages, you can explore everything here.


According to Statista, the market size of Artificial Intelligence (AI) is projected to exceed $1.8 trillion by 2030. This reflects the growing usage of and demand for AI, especially in today’s competitive landscape.

Organizations must adopt this technology to streamline their operations and stay competitive. To do so, the most crucial thing they should be well versed in is the AI tech stack.

Without getting detailed information regarding the AI tech stack, businesses can’t optimize processes, drive innovation, and gain a competitive advantage. Whether you are a small company or an established market player, you can find here everything about the AI tech stack. So, let’s start our discussion without wasting any more time.

What is Generative AI Tech Stack?

A generative AI tech stack includes the frameworks and tools necessary for building an AI system that can create content, be it images or text. Deep learning frameworks like PyTorch and TensorFlow are the backbone of the entire AI system. They provide a solid foundation for building and training models.

It includes model architectures such as GPT and BERT. The system can manage data effectively by using tools like HDFS and Apache Kafka. The training process generally depends on powerful hardware, including TPUs and GPUs, and on cloud platforms such as Google Cloud and AWS.

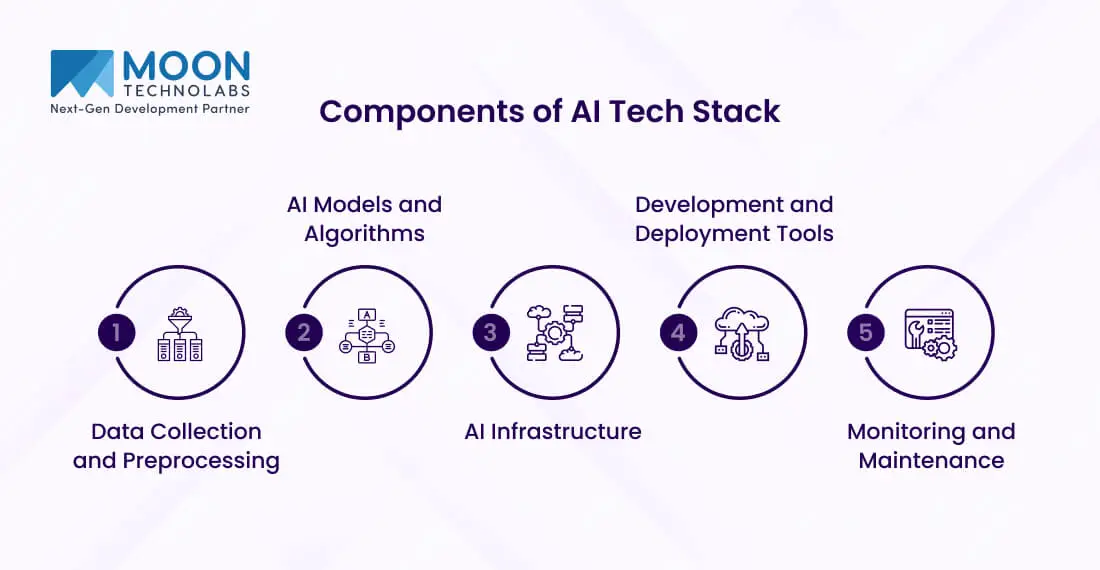

Components of AI Tech Stack

A gen AI tech stack boasts a multi-layer structure that includes a range of components necessary to develop, deploy, and maintain various AI applications. Let’s have an in-depth look at all the components:

Data Collection and Preprocessing

Since the entire AI system works on data, high-quality data is a key element. Data is collected from a range of channels, such as web scraping, APIs, and databases. These multiple data sources must then be integrated into a single data repository.

It’s possible with the use of the right data integration tools and various platforms that can handle larger volumes of data and offer seamless access.

Raw data is often incomplete or inconsistent, so it should undergo a thorough cleaning and transformation process. Data cleaning involves removing duplicates, handling missing values, and correcting errors.

The transformation process covers standardization, normalization, and the encoding of categorical variables. These steps ensure that the data fed into AI models is accurate and consistent.
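To make the cleaning and transformation steps above more concrete, here is a minimal sketch in plain Python. The records, the field names (`age`, `plan`), and the choice of mean imputation and min-max scaling are assumptions made for illustration; a real pipeline would typically rely on a data library and on rules suited to the dataset.

```python
# Hypothetical raw records; the field names are assumptions for illustration.
raw_records = [
    {"age": 25, "plan": "basic"},
    {"age": 25, "plan": "basic"},      # exact duplicate
    {"age": None, "plan": "premium"},  # missing value
    {"age": 45, "plan": "basic"},
]

def remove_duplicates(records):
    """Keep the first occurrence of each identical record."""
    seen, unique = set(), []
    for record in records:
        key = tuple(sorted(record.items()))
        if key not in seen:
            seen.add(key)
            unique.append(record)
    return unique

def fill_missing_age(records):
    """Replace missing ages with the mean of the known ages."""
    known = [r["age"] for r in records if r["age"] is not None]
    mean_age = sum(known) / len(known)
    return [{**r, "age": mean_age if r["age"] is None else r["age"]}
            for r in records]

def min_max_normalize(values):
    """Scale values linearly into the range [0, 1]."""
    low, high = min(values), max(values)
    if high == low:
        return [0.0 for _ in values]
    return [(v - low) / (high - low) for v in values]

def one_hot(values):
    """Encode a categorical column as one 0/1 column per category."""
    categories = sorted(set(values))
    return [[1 if v == c else 0 for c in categories] for v in values]

# Cleaning first, then transformation into numeric feature rows.
cleaned = fill_missing_age(remove_duplicates(raw_records))
ages = min_max_normalize([r["age"] for r in cleaned])
plans = one_hot([r["plan"] for r in cleaned])
features = [[age] + plan for age, plan in zip(ages, plans)]
```

Each row of `features` now holds a normalized age followed by the one-hot encoded plan, which is the kind of consistent numeric input a model expects.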

AI Models and Algorithms

ML (machine learning) models are the pillars of any AI app. Whether it’s decision trees, linear regression, or support vector machines, these models are designed to learn patterns from data and make predictions based on them.

The right model is selected based on the nature of the data and the required interpretability and accuracy. Deep learning is a subset of ML that involves neural networks with a large number of layers.

Architectures like Recurrent Neural Networks (RNNs) are appropriate for sequential data such as natural language. Meanwhile, Convolutional Neural Networks (CNNs) are the right option for image processing tasks.
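As a small illustration of what "learning patterns from data and making predictions" means, the sketch below fits a one-variable linear regression with ordinary least squares in plain Python. The toy data and the function names are assumptions for this example, not part of any particular framework.

```python
def fit_linear_regression(xs, ys):
    """Fit y = slope * x + intercept by ordinary least squares."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return slope, intercept

def predict(model, x):
    """Apply the learned line to a new input."""
    slope, intercept = model
    return slope * x + intercept

# Toy training data that follows y = 2x + 1 exactly.
xs = [1.0, 2.0, 3.0, 4.0]
ys = [3.0, 5.0, 7.0, 9.0]

model = fit_linear_regression(xs, ys)
forecast = predict(model, 5.0)  # prediction for an unseen input
```

The same fit-then-predict pattern carries over to decision trees, support vector machines, and neural networks; only the model family and the training procedure change.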

AI Infrastructure

The computational demands of AI make robust hardware mandatory. Graphics Processing Units (GPUs) are widely used because they perform parallel computation efficiently.

Tensor Processing Units (TPUs) are another popular component of AI infrastructure. They’re designed specifically to accelerate ML workloads. This hardware effectively reduces the overall time needed to train complicated models.

Cloud services offer a fully flexible and scalable infrastructure for AI development and deployment.

Many top-rated providers, including Microsoft Azure, Amazon Web Services (AWS), Google Cloud, etc., provide AI and ML services that include managed services, pre-configured environments, and various tools for processing, data storage, and analysis.

The major advantage of using cloud platforms is that they allow organizations to access robust computing resources without the necessity for substantial upfront investment in physical hardware.

Development and Deployment Tools

Frameworks and libraries are pivotal for creating necessary AI models. As mentioned, TensorFlow, one of the popular deep learning frameworks, is known for its scalability and robustness.

PyTorch is popular for its ease of use and dynamic computation graphs. Both frameworks include pre-trained models, vast libraries, and tools for building and training models.

Machine Learning Operations (MLOps) tools can streamline the overall deployment and management process of ML models. These tools can support the lifecycle of ML models, from development and training to deployment and monitoring.

MLOps practices are useful when it comes to automating various repetitive tasks, enhancing model reproducibility, and thus ensuring consistency across multiple environments.

Monitoring and Maintenance

After deployment, it’s essential to monitor AI models continuously to ensure that they deliver performance as per expectations. This process involves monitoring several important metrics, such as precision, accuracy, F1 score, and recall.
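The metrics named above can be computed directly from a model's predictions and the true labels. The following is a minimal sketch for a binary classifier; the label lists are made up for illustration.

```python
def classification_metrics(y_true, y_pred, positive=1):
    """Compute accuracy, precision, recall, and F1 for binary labels."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    correct = sum(1 for t, p in pairs if t == p)

    accuracy = correct / len(pairs)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if (precision + recall) else 0.0)
    return {"accuracy": accuracy, "precision": precision,
            "recall": recall, "f1": f1}

# Hypothetical ground truth and predictions from a deployed model.
y_true = [1, 0, 1, 1, 0, 1, 0, 0]
y_pred = [1, 0, 0, 1, 1, 1, 0, 0]

metrics = classification_metrics(y_true, y_pred)
```

Tracking these values over time, rather than only at launch, is what turns them into a monitoring signal.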

Performance monitoring is helpful when it comes to identifying drifts in model accuracy due to changing data patterns, which is needed to maintain model reliability and effectiveness.

Regularly updating and retraining the AI model is essential for adapting to new data and changing environments. For continuous improvement, you need to perform various activities, such as periodic retraining using fresh data, exploring new model architectures, and hyperparameter tuning.

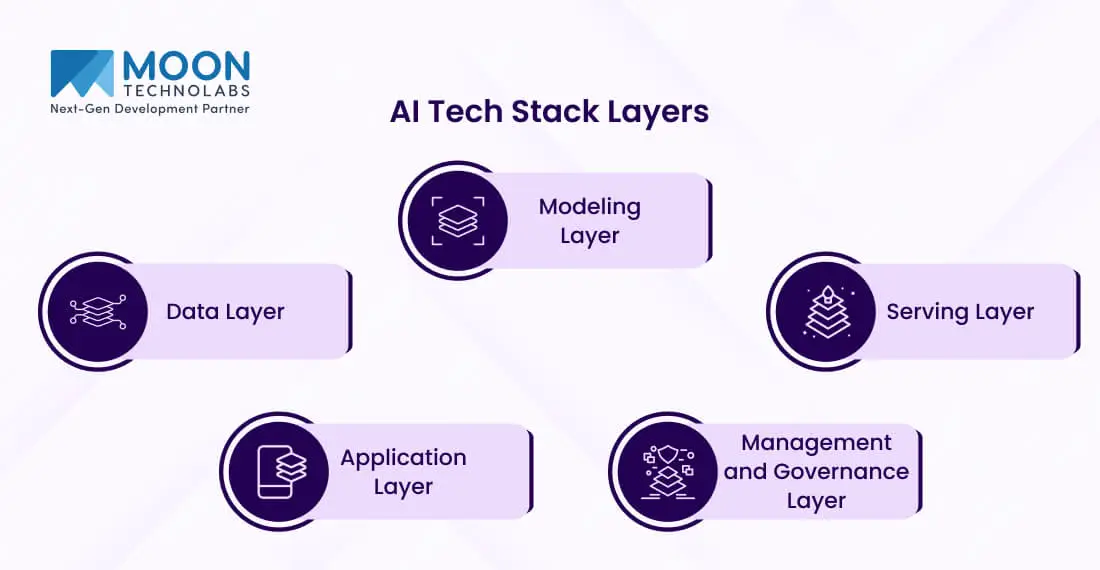

AI Tech Stack Layers

The AI tech stack is organized into several layers. Each layer plays an important role in the life cycle of AI apps. Let’s get a complete overview of the layers of the AI tech stack:

Data Layer

As one of the crucial layers, the data layer handles the collection, management, and storage of the data that feeds the AI models. Data storage solutions need to be secure, scalable, and efficient.

Common storage solutions include relational databases like PostgreSQL and MySQL, NoSQL databases like MongoDB and Cassandra, object storage like Amazon S3, and many others.

Once the data is stored, it needs to be processed and transformed into a format that is appropriate for AI models.

Many data processing frameworks, such as Apache Spark and Apache Hadoop, offer robust tools for large-scale data processing. ETL tools, such as Talend, NiFi, and Informatica, are also needed to move and transform data from multiple sources into a unified format.

Modeling Layer

The modeling layer generally focuses on creating and training several AI models. Numerous model training platforms, such as PyTorch, TensorFlow, Scikit-learn, and others, are used to create ML and deep learning models. These platforms are essential because they provide various pre-built algorithms, neural network architectures, and various utilities to make the model development process simple.

Moreover, various cloud-based services like Amazon SageMaker, Google AI Platform, and Azure Machine Learning offer a scalable environment for training models on large datasets. Selecting the right model is vital for improving the AI system’s performance. To compare candidate models, you can use techniques such as cross-validation, grid search, random search, and more.

These techniques are also essential for hyperparameter tuning. Various automated ML tools, including Google AutoML, H2O.ai, and Microsoft Azure AutoML, can streamline the entire process by automatically selecting and training models according to the provided task and dataset.
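Grid search combined with cross-validation can be sketched in a few lines. The example below is an illustration only: it uses a tiny hand-written k-nearest-neighbors classifier on made-up one-dimensional data and searches over the hyperparameter `k`. In practice you would normally use the equivalent utilities of an ML library instead of writing these loops yourself.

```python
def knn_predict(train, x, k):
    """Predict by majority vote among the k nearest 1-D training points."""
    nearest = sorted(train, key=lambda point: abs(point[0] - x))[:k]
    votes = [label for _, label in nearest]
    return max(set(votes), key=votes.count)

def k_fold_indices(n, folds):
    """Split indices 0..n-1 into interleaved validation folds."""
    return [list(range(i, n, folds)) for i in range(folds)]

def cross_val_accuracy(data, k, folds=3):
    """Average validation accuracy of k-NN across all folds."""
    scores = []
    for val_idx in k_fold_indices(len(data), folds):
        held_out = set(val_idx)
        train = [p for i, p in enumerate(data) if i not in held_out]
        val = [data[i] for i in val_idx]
        correct = sum(knn_predict(train, x, k) == y for x, y in val)
        scores.append(correct / len(val))
    return sum(scores) / len(scores)

def grid_search(data, k_values):
    """Evaluate every candidate k and return the best one with all scores."""
    results = {k: cross_val_accuracy(data, k) for k in k_values}
    best_k = max(results, key=results.get)
    return best_k, results

# Hypothetical labelled data: (feature, class) pairs.
data = [(0.5, 0), (1.0, 0), (1.5, 0), (2.0, 0), (2.5, 0), (3.0, 0),
        (5.0, 1), (5.5, 1), (6.0, 1), (6.5, 1), (7.0, 1), (7.5, 1)]

best_k, results = grid_search(data, k_values=[1, 3, 5])
```

Random search follows the same structure, except that candidate values are sampled from a range instead of being enumerated from a fixed grid.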

Serving Layer

The serving layer is responsible for deploying trained models into a production environment. It involves creating the infrastructure that handles incoming requests and serves predictions accordingly.

Model deployment platforms like TorchServe and TensorFlow Serving offer an optimized environment for serving models at scale. API integration is necessary to make AI models accessible to other applications. Some of the standard approaches for exposing model endpoints are GraphQL and RESTful APIs.

Many tools, such as Django REST framework, FastAPI, and Flask, can also simplify the creation of API endpoints for AI models. Besides, cloud services like Google Cloud Functions and AWS Lambda enable serverless deployment of AI models while minimizing the overhead of managing servers and infrastructure.
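To show the shape of a REST-style prediction endpoint without depending on any of the frameworks above, here is a minimal sketch written against Python's standard WSGI interface. The `/predict` path, the JSON payload format, and the stand-in `predict` function with fixed weights are all assumptions for illustration; a production service would load a real trained model and would usually be built with one of the frameworks mentioned.

```python
import io
import json

def predict(features):
    """Stand-in for a trained model: a hypothetical fixed linear scorer."""
    weights = [0.4, 0.6]
    return sum(w * x for w, x in zip(weights, features))

def json_response(start_response, status, payload):
    """Send a JSON body with the given HTTP status line."""
    body = json.dumps(payload).encode("utf-8")
    start_response(status, [("Content-Type", "application/json"),
                            ("Content-Length", str(len(body)))])
    return [body]

def app(environ, start_response):
    """WSGI application exposing POST /predict."""
    if (environ.get("REQUEST_METHOD") != "POST"
            or environ.get("PATH_INFO") != "/predict"):
        return json_response(start_response, "404 Not Found",
                             {"error": "not found"})
    try:
        length = int(environ.get("CONTENT_LENGTH") or 0)
        payload = json.loads(environ["wsgi.input"].read(length))
        features = [float(x) for x in payload["features"]]
    except (KeyError, TypeError, ValueError):
        return json_response(start_response, "400 Bad Request",
                             {"error": "expected {'features': [numbers]}"})
    return json_response(start_response, "200 OK",
                         {"prediction": predict(features)})

def call_app(method, path, raw_body):
    """Invoke the app in-process, the way a WSGI server would."""
    captured = {}

    def start_response(status, headers):
        captured["status"] = status

    environ = {
        "REQUEST_METHOD": method,
        "PATH_INFO": path,
        "CONTENT_LENGTH": str(len(raw_body)),
        "wsgi.input": io.BytesIO(raw_body),
    }
    chunks = app(environ, start_response)
    return captured["status"], json.loads(b"".join(chunks))

# To serve over HTTP locally, one option is the standard library server:
#   from wsgiref.simple_server import make_server
#   make_server("127.0.0.1", 8000, app).serve_forever()
```

Calling `call_app("POST", "/predict", b'{"features": [1.0, 2.0]}')` exercises the endpoint without opening a network port, which is also a convenient way to test it.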

Application Layer

The application layer, as the name suggests, is where AI models are embedded into end-user apps. These apps range from recommendation systems to computer vision applications and predictive analytics tools. You can integrate AI capabilities into apps to improve their functionality and offer a more personalized user experience.

The success of any AI-based app mainly relies on both UI and UX designs. So, you need to craft the most effective UI/UX design that ensures the accessibility of many AI features. Many tools, such as Angular, React, and Vue.js, are common for creating responsive and fully interactive user interfaces.

Management and Governance Layer

A management and governance layer includes several practices & policies that are necessary to ensure full security & compliance of various AI systems. Data security is important and requires powerful encryption, monitoring, and access controls for the protection of various sensitive details.

Ensuring compliance with several regulations like CCPA, GDPR, and HIPAA is also important, especially for many industries that handle personal and sensitive data. Effective lifecycle management is necessary to ensure AI models remain relevant and accurate with time.

It includes the overall process of retraining, continuous monitoring, and also updating several models as new data becomes available. MLOps practices are implemented to automate and make the deployment process, maintenance, and monitoring of AI models hassle-free.


Programming Languages for Generative AI

It’s important to choose the best programming language for effective AI development. While selecting the programming languages, you need to focus on several factors, such as community support, ease of use, performance, and more. Let’s explore here some of the programming languages you can leverage for generative AI:

Python

As one of the best-known programming languages for AI development, Python is popular for its simplicity and its wide range of frameworks and libraries for AI and ML tasks. Some of them include Keras and TensorFlow.

As a high-level neural network API written in Python, Keras can run on top of TensorFlow. It enables quick and easy prototyping and supports both recurrent and convolutional networks. It runs on both GPUs and CPUs.

TensorFlow, an open-source deep learning framework developed by Google, lets developers create and deploy machine learning models efficiently. Its flexible architecture enables users to run computations across a range of platforms, such as TPUs, GPUs, and others.

R

R is another programming language, used for graphics and statistical computing, which is why it has gained popularity in the field of data science. R excels in statistical modeling and provides a wide array of graphical techniques, models, tests, and more. It has emerged as a powerful tool for analyzing data and extracting insights.

R is also known for its graphical abilities, with libraries such as ggplot2 that make it convenient to visualize data. This matters when you need to understand complex datasets and the performance of AI models.

Though R is less frequently used than Python in AI, it does integrate with multiple AI frameworks. TensorFlow comes with an R interface, which allows R users to create and train ML models with its capabilities. Like TensorFlow, Keras also has an R package. It allows users to work with its high-level neural network API within the R environment.

Java

If you are in search of a high-performance language, Java is a strong candidate. It’s known for its reliability and scalability, which makes it a top choice for enterprise-level apps. Java is popular for its ability to run on any platform that provides a Java Virtual Machine (JVM).

Java is also architecture-neutral, which is why it is versatile and well suited to many large-scale AI apps that need substantial computational resources.

Another significant advantage of Java is that it offers strong runtime performance and automatic memory management. Due to its performance and stability, Java is used in many enterprise apps. Whether you seek to develop large-scale financial systems, scientific apps, or eCommerce platforms, you can use Java to develop a range of software.

Julia

Julia is another high-level programming language for technical computing. Its syntax is familiar to users of several other technical computing environments. Julia excels in numerical computing and offers performance approaching that of low-level languages such as C and Fortran. This makes it suitable for apps that need intensive mathematical computations.

Julia is gaining popularity in the AI community mainly due to its performance and ease of use. Flux.jl, a machine learning library for Julia, provides a flexible and simple API for the development and training of neural networks.

The Julia ecosystem also offers a consistent interface for a range of machine learning models, which makes it convenient to experiment with different techniques and algorithms.

Other Languages

C++ is known for its outstanding performance and is used in scenarios where speed and optimization are vital. The language gives fine-grained control over system resources and memory management, which helps when optimizing the performance of AI models.

JavaScript is the leading web programming language, and its role in AI is growing, specifically for front-end apps. With JavaScript, developers can run AI models directly in the browser. It works effectively when it comes to developing interactive AI-powered web apps that offer real-time feedback to users.

AI Stack Stages

AI development involves several distinct stages. Every stage is crucial for building a top-rated AI system. Let’s look at each stage in detail:

Data Preparation Stage

Data preparation is the first and most important stage for any AI project. In this stage, raw data is collected, cleaned, and transformed into a format that is appropriate for analysis.

The quality of the data can impact the model’s performance, making this stage pivotal. Data preparation includes several activities, such as data collection, data cleaning, data transformation, and data splitting.
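Data splitting, the last activity listed above, usually means holding back part of the data so the model can be evaluated on examples it has not seen. Here is a minimal sketch; the 80/20 ratio and the fixed seed are assumptions chosen for illustration.

```python
import random

def train_test_split(records, test_fraction=0.2, seed=42):
    """Shuffle reproducibly, then split into (train, test) lists."""
    shuffled = list(records)               # leave the caller's list untouched
    random.Random(seed).shuffle(shuffled)  # fixed seed keeps the split repeatable
    test_size = int(round(len(shuffled) * test_fraction))
    return shuffled[test_size:], shuffled[:test_size]

records = list(range(10))  # stand-in for ten prepared data rows
train, test = train_test_split(records)
```

Using a fixed seed means the same rows land in the test set on every run, which keeps evaluation results comparable between experiments.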

Model Development Stage

After preparing the data, model development is the next stage. It involves selecting the right algorithms and training models to learn from the data. It includes important activities such as algorithm selection, model training, hyperparameter tuning, and model evaluation.

Based on the nature of the problem, you can select the most suitable deep learning or machine learning algorithms. The training data is then used to teach the model to recognize patterns and make predictions.

Hyperparameter tuning adjusts the parameters that govern the training process in order to improve the model’s performance.
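The learning rate is a typical example of a hyperparameter that governs training. The sketch below trains the same one-weight model with gradient descent under several candidate learning rates and keeps the one with the lowest loss. The data and candidate values are assumptions, and for brevity the loss is measured on the training data; in practice you would compare candidates on a separate validation set.

```python
def train(xs, ys, learning_rate, epochs=200):
    """Fit y = w * x by gradient descent on mean squared error."""
    w = 0.0
    n = len(xs)
    for _ in range(epochs):
        gradient = sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / n
        w -= learning_rate * gradient
    return w

def mse(w, xs, ys):
    """Mean squared error of the model y = w * x."""
    return sum((w * x - y) ** 2 for x, y in zip(xs, ys)) / len(xs)

# Toy data that follows y = 2x exactly.
xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.0, 4.0, 6.0, 8.0]

# Candidate values range from too small (slow) to too large (diverges).
candidates = [0.0001, 0.01, 0.05, 0.2]
losses = {lr: mse(train(xs, ys, lr), xs, ys) for lr in candidates}
best_lr = min(losses, key=losses.get)
```

With this data, the smallest rate barely moves the weight within 200 epochs and the largest one overshoots on every step, so the useful values sit in between.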

Deployment Stage

Deployment is the next stage after the successful completion of development. This stage involves integrating the model into a full production environment and making real-time predictions or decisions. Let’s explore some of the crucial activities, such as model integration, API development, scalability, and security.

Model integration is all about embedding models into the apps, services, or systems where they function. API development creates interfaces that enable other systems to interact with the model.

Scalability ensures the system can handle increased loads and keep performing efficiently as demand grows. Implementing security measures is essential for protecting the data and model from unauthorized access.

Monitoring and Optimization Stage

After deployment, continuous optimization and monitoring are necessary to maintain the model’s performance over time. This important stage involves performance monitoring, error analysis, model retraining, and resource management.

Performance monitoring involves tracking models’ performance and prediction metrics in real-time. It helps detect issues like data drift or model degradation.
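One simple way to flag data drift is to compare recent values of a feature against a reference sample from training time. The sketch below measures how far the mean has moved in units of the reference standard deviation. The threshold of 2.0 and the sample values are assumptions for illustration, and production systems often use more robust statistical tests.

```python
import statistics

def mean_shift_score(reference, current):
    """Shift of the current mean, in reference standard deviations."""
    ref_mean = statistics.mean(reference)
    cur_mean = statistics.mean(current)
    ref_std = statistics.stdev(reference)
    if ref_std == 0:
        return 0.0 if cur_mean == ref_mean else float("inf")
    return abs(cur_mean - ref_mean) / ref_std

def has_drifted(reference, current, threshold=2.0):
    """Flag drift when the mean shift exceeds the chosen threshold."""
    return mean_shift_score(reference, current) > threshold

# Hypothetical feature values: training-time sample vs. two live windows.
reference = [10.0, 11.0, 9.0, 10.5, 9.5]
stable_window = [10.2, 9.8, 10.1, 10.4, 9.9]
shifted_window = [14.0, 15.0, 13.5, 14.5, 15.5]

stable_alert = has_drifted(reference, stable_window)
shifted_alert = has_drifted(reference, shifted_window)
```

A check like this can run on a schedule, with an alert or a retraining job triggered whenever a window is flagged.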

Error analysis involves investigating and analyzing several mistakes that models make to identify various underlying issues.

Model retraining is another significant task that involves updating the model with new data to enhance its accuracy and adapt to changing conditions. Resource management is all about optimizing computational resources to ensure efficient model operation.

Feedback and Improvement Stage

This is the final stage, which serves its purpose of gathering feedback from users and ensuring continuous improvement. It involves various activities such as user feedback, iterative development, A/B testing, documentation, and reporting. User feedback is all about collecting crucial insights and getting required suggestions from users who interact directly with the AI system.

You can incorporate feedback and thus make iterative adjustments to enhance the overall performance of the model. A/B testing involves conducting experiments to compare different versions of the model and determine the best-performing one. Documentation and reporting give you a complete record of changes, performance, and updates.
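For A/B testing, a common way to decide whether one model version really outperforms another is a two-proportion z-test on a success metric such as click-through or task completion. The sketch below is one possible approach; the counts are invented for illustration, and the 0.05 significance level is a conventional choice rather than a rule.

```python
import math

def two_proportion_z_test(successes_a, total_a, successes_b, total_b):
    """Return (z, two-sided p-value) for the difference in success rates."""
    rate_a = successes_a / total_a
    rate_b = successes_b / total_b
    pooled = (successes_a + successes_b) / (total_a + total_b)
    std_error = math.sqrt(pooled * (1 - pooled) * (1 / total_a + 1 / total_b))
    z = (rate_b - rate_a) / std_error
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal tail
    return z, p_value

# Hypothetical experiment: model A vs. model B, 1000 users each.
z, p_value = two_proportion_z_test(480, 1000, 530, 1000)
significant = p_value < 0.05
```

The function assumes both groups contain a mix of successes and failures; if the pooled rate were exactly 0 or 1, the standard error would be zero and the test would not apply.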


Conclusion

So, now you have complete information regarding the generative AI tech stack. It’s advisable to get professional assistance and implement the generative AI tech stack in your business to stay ahead of the curve. It helps you unlock several new growth opportunities. You must invest in the right tools and infrastructure to create and implement generative AI models.

FAQs

01

What is the full stack for AI?

Full-stack AI includes data collection, preprocessing, storage, model development, training, evaluation, deployment, and monitoring. It also includes several important components, such as machine learning frameworks, data pipelines, cloud infrastructure, APIs, and user interfaces, for seamless integration.

02

How to build an AI stack?

To develop an AI stack, you can integrate data gathering, storage, processing, model training, and deployment. You can leverage several tools, such as TensorFlow, Python, cloud services, etc., to build an AI stack.

03

What is an example of a tech stack?

The most popular example of a tech stack is LAMP (Linux, Apache, MySQL, and PHP). A tech stack can be either front-end or back-end, or a combination of both.

04

What is the most advanced AI technology in the world?

GPT-4 is often cited as one of the most advanced AI technologies in the world.